MODERATOR:

Heather R Staines

Independent Consultant

Trumbull, Connecticut

SPEAKERS:

Hannah Drury

Product Manager

eLife/Sciety

Peterborough, United Kingdom

Samantha Hindle

Content Manager

bioRxiv and medRxiv

Cofounder

PREreview

Stefano M Bertozzi

Dean Emeritus and Professor of Health Policy and Management

UC Berkeley School of Public Health

Gunther Eysenbach

CEO and Executive Editor

JMIR Publications

Toronto, Ontario, Canada

REPORTER:

Tony Alves

Hopedale, Massachusetts

The session “Overlay Journals, Overlay Reviews: Has Their Time Finally Come?” was held virtually on May 4, 2021. Moderated by Heather Staines, Senior Consultant at Delta Think, the session featured presentations by Stefano M Bertozzi, Dean Emeritus and Professor of Public Health Policy and Management at UC Berkeley; Gunther Eysenbach, CEO and Executive Editor, JMIR Publications; Samantha Hindle, Content Manager of bioRxiv and medRxiv and Co-founder of PREreview; and Hannah Drury, Product Manager of Sciety at eLife.

COVID-19 has accelerated the use of preprints, and researchers and the media are increasingly turning to preprint servers for an early glimpse of new studies. Preprint servers have come under increased scrutiny, and many have risen to the challenge by implementing various forms of peer review. A related phenomenon is the growth of "overlay journals," which use "overlay reviews" to help validate the science in preprints, increasing trust in and transparency of preprints. For readers unfamiliar with the concept, overlay journals are online, open access compilations of preprints, public domain publications, and already-published open access articles. Some compilations are thematic, addressing specific topics, and many add a layer of review and commentary (overlay reviews).

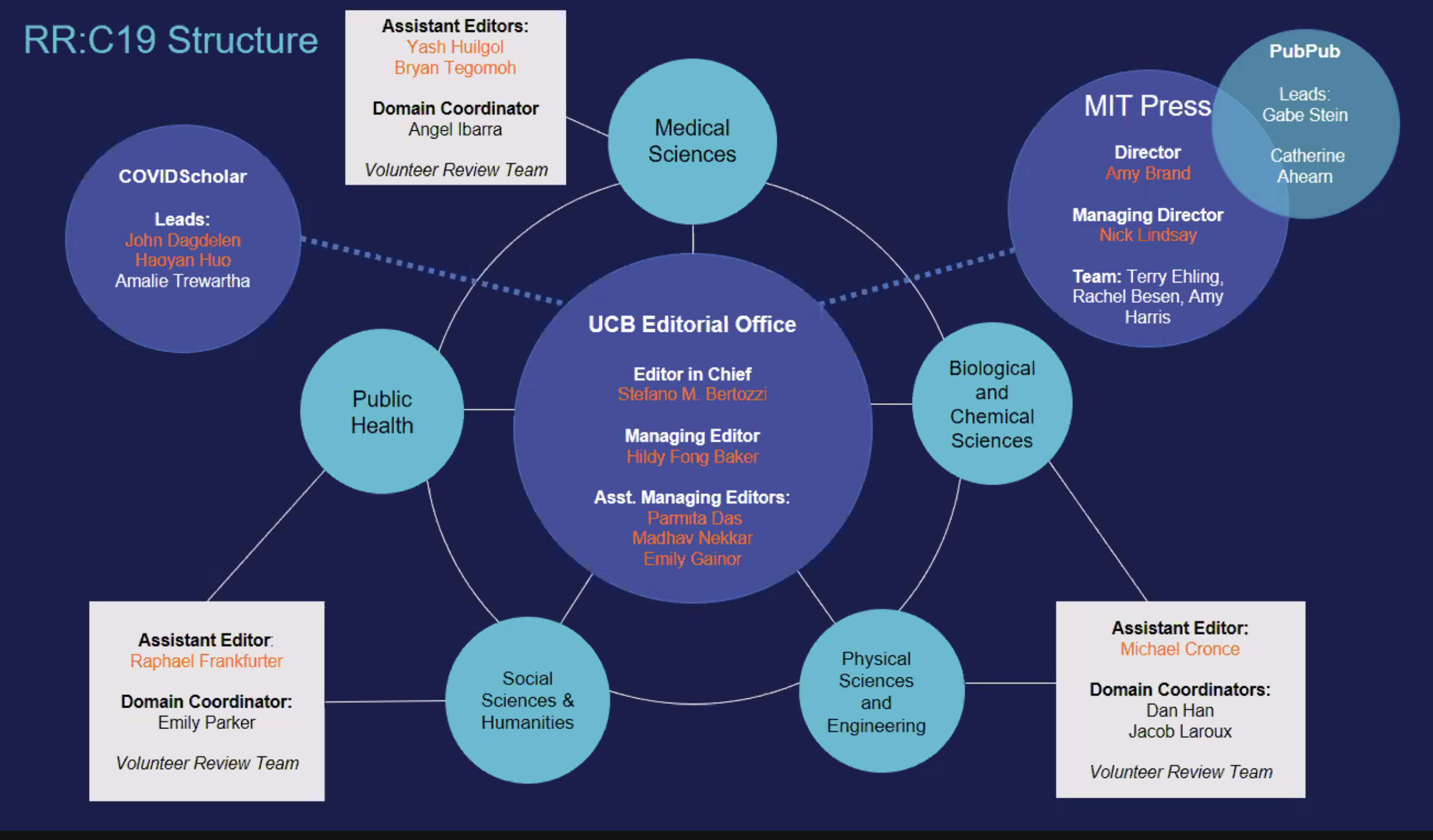

Stefano Bertozzi presented first, defining overlay reviews as "peer review that is not hosted on the same location as the content." Bertozzi is the founding editor-in-chief of Rapid Reviews: COVID-19 (rapidreviewscovid19.mitpress.mit.edu),1 also known as RR:C19, which is published by the MIT Press. He explained that RR:C19 grew out of the need to accelerate peer review of the COVID-19 research posted on preprint servers. It is primarily a volunteer endeavor, with an editorial office based at UC Berkeley.

Bertozzi then described the RR:C19 review process. RR:C19 works with COVIDScholar (covidscholar.org),2 a web-scraping tool that uses natural language processing to gather COVID-19–related research from across the Internet. RR:C19 groups COVID-19 preprints into five categories: Medical Sciences, Biological and Chemical Sciences, Physical Sciences and Engineering, Social Sciences and Humanities, and Public Health.

A team of Ph.D. students screens the preprints for novelty, impact, significance, urgency, and media interest, then selects and sorts those to be peer-reviewed. Assistant editors manage the different domains and, with the help of artificial intelligence, assign the preprints to peer reviewers. The reviewers' reports are posted, along with links to the preprints, on the PubPub platform (https://www.pubpub.org/),3 a project of the Knowledge Futures Group. The process is more transparent than traditional peer review: authors can respond to the reviews, and journals considering the research for publication can also see and use them.

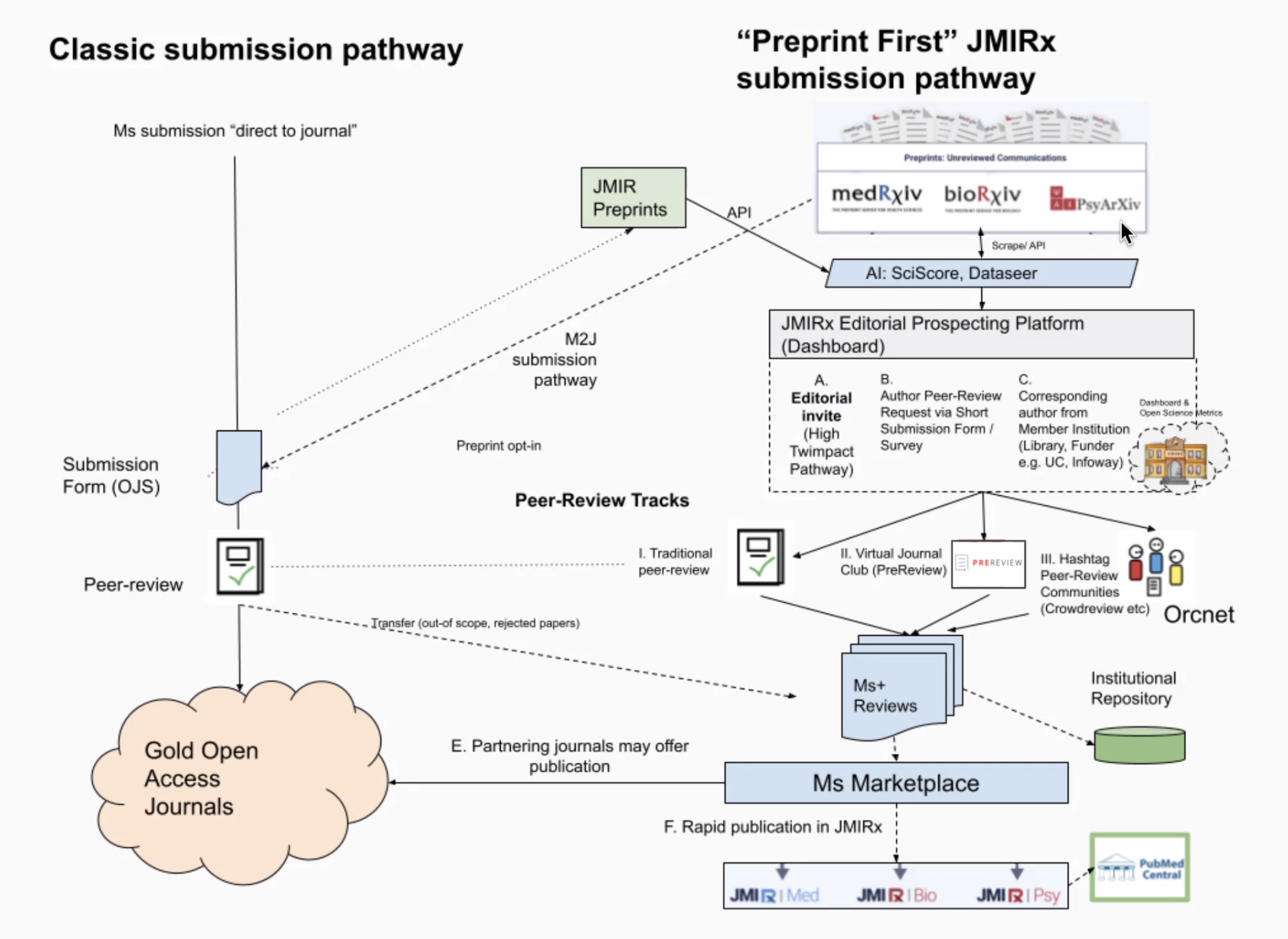

Gunther Eysenbach presented second, introducing the concept of the "superjournal," a type of overlay journal that sits on top of preprint servers. Superjournals offer the same services as traditional journals, such as peer review, copyediting, archiving, and indexing. The difference is that authors explicitly opt in to be reviewed and actively revise their preprint according to reviewer comments. JMIR's superjournal, JMIRx (jmirx.org/home),4 is the first overlay journal indexed by PubMed and comprises three sub-superjournals: JMIRx|MED, JMIRx|Bio, and JMIRx|Psy. JMIRx focuses on preprints that have not yet been submitted to a journal for publication.

Eysenbach then walked through the JMIRx process. JMIRx editors scan preprints on medRxiv, bioRxiv, and PsyArXiv for interesting and novel research and use an online survey to extend offers to the authors of the preprints they select. The editors then solicit peer reviews, which can take various forms depending on the author's preference: traditional review, crowdsourced review, or "journal club" review, a transcribed conversation among interested readers. Authors can revise their article on the basis of the feedback and post the revised version back to the preprint server. Once peer review is complete, the manuscript enters the "Ms Marketplace," where the author can choose to have it published in one of JMIR's journals or sent to a partner journal, where it might undergo additional review. Manuscripts that are not picked up by another journal can still be published in JMIRx.

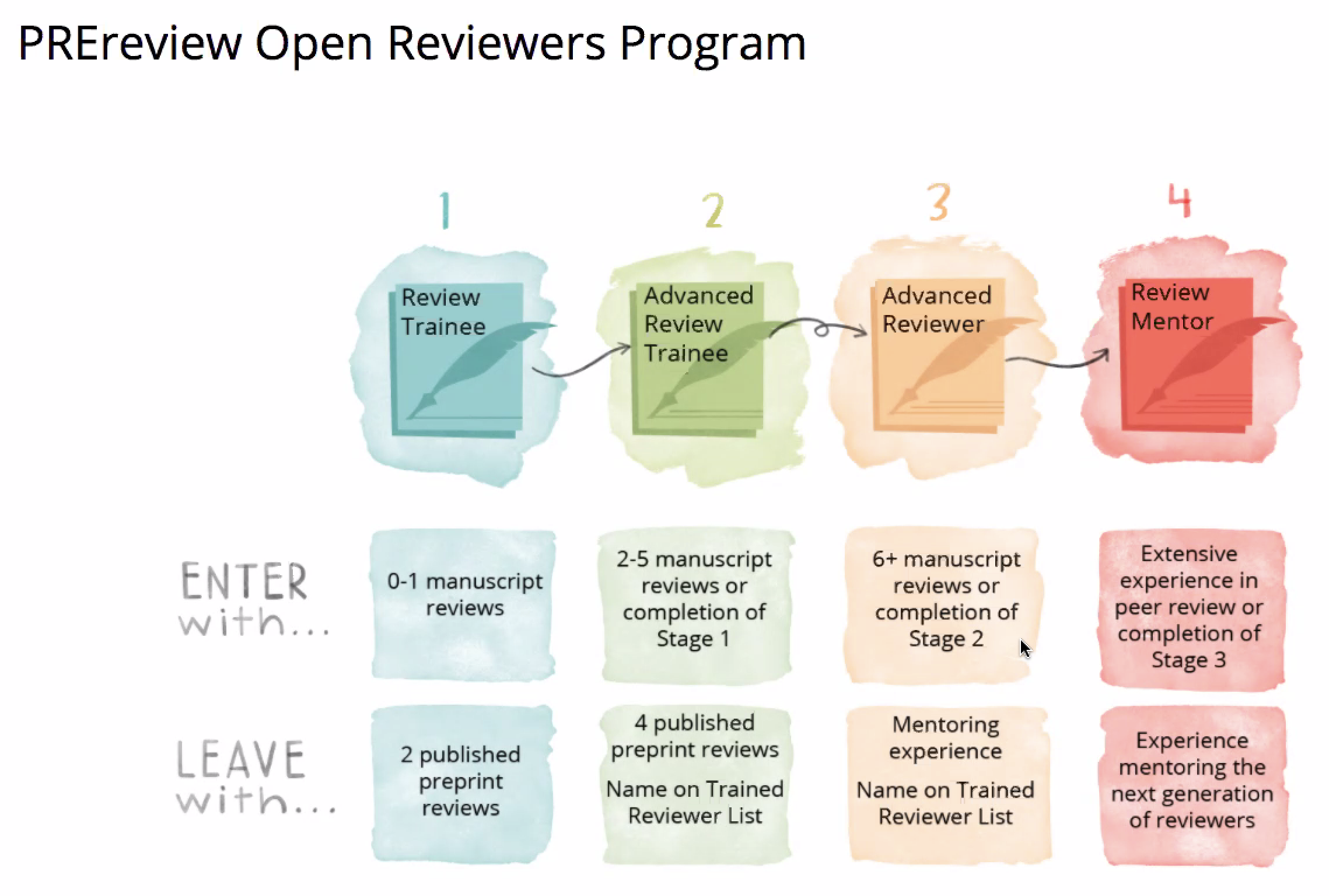

The third presenter, Samantha Hindle, discussed PREreview, an initiative that provides tools, training, and support to help early-career researchers and underrepresented scholars build peer-review expertise, empowering them to engage in community review. This is done through the PREreview platform (www.prereview.org),5 where researchers can review preprints individually or collaboratively, take part in crowdsourced peer review through facilitated live-streamed preprint journal clubs, and gain support through an interactive four-stage training program called the PREreview Open Reviewers program.

Hindle described the features of the PREreview platform and its two community-building programs: facilitated live-streamed preprint journal clubs and the Open Reviewers program. A registered PREreview user can (1) perform a rapid review using a questionnaire that includes a graphical comparison with other reviews of the same article; (2) write a traditional review, independently or with others; (3) comment on and endorse other reviews; and (4) report users who have violated the code of conduct. In the live-streamed preprint journal clubs, researchers discuss preprints together; the output is a collaborative review that can be integrated into the peer-review process and published on the PREreview platform. The Open Reviewers program, which focuses on supporting early-career researchers, offers both cohort-based and self-guided training. It has four stages of engagement, and reviewers can join at any stage according to their level of experience.

The 14-week cohort-based program includes interactions between reviewer trainees and mentors, community calls, strategies for evaluating articles, and training as a future mentor. The 8-month self-guided program is for advanced reviewer trainees and includes resources such as videos and written guides. The ultimate goal of the program is to increase diversity in peer review and to train the next generation of socially conscious reviewers.

The fourth and final speaker, Hannah Drury, discussed Sciety (sciety.org),6 a new initiative started by eLife. Like PREreview, Sciety aims to build a network of peer reviewers focused on evaluating preprints; and like RR:C19, it lets other groups run their own review processes however they wish, while gathering their preprint evaluations in one place. Drury demonstrated the platform, showing how it aggregates reviews from different preprint review services, including the Novel Coronavirus Research Compendium, PREreview, PeerJ, Review Commons, eLife, and Peer Community In. In addition to viewing the aggregated reviews, users can access different versions of an article, rate the evaluations, and tweet about the article. Sciety is still in its early days, and more features and review services are likely to be added.

References and Links