A straightforward description of the Manuscript Exchange Common Approach (MECA) recommendation is that it is a documented methodology describing how to create a package of computer files, and how to transfer the contents of that package in an automated, machine-readable way. The magic of MECA is that it lays out an easy-to-follow map to accomplish this. The MECA specification fully describes how a software system should structure, assemble, and then transmit the files. However, the what and the how of MECA are not what is most important. The most important thing is the why. The purpose of MECA is to establish a common, easy-to-implement protocol for transferring research articles from one system to another, so that these different systems do not have to develop separate pairwise solutions for each and every system that they need to talk to.

Having worked on this initiative for the past few years, first as a founding member representing Aries Systems, then as co-chair of the National Information Standards Organization (NISO) MECA Working Group alongside my colleague Stephen Laverick of Green Fifteen Publishing Consultancy, I will describe in this article the genesis of the MECA project and what drove the collaboration, and define the components of the MECA protocol and specification. I will also look toward the future and speculate on how MECA might evolve.

How and Why It Began

Near the end of 2016, John Sack from HighWire Press contacted Lyndon Holmes, CEO and Founder of Aries Systems, and asked if Aries would be interested in collaborating with other submission system vendors to come up with a common methodology for transferring manuscripts between their varied systems. This wasn’t a surprise considering I had recently seen Sack’s presentation at the STM Frankfurt meeting entitled “Friction in the Workflow: Where Are We Generating More Heat Than Light?”1 where he discussed the frustration faced by authors who find themselves repeating tasks and duplicating efforts during the research evaluation process.

Sack stated, “For one article, authors need to prepare separate submissions with separate rules, forms, formats, and files for each journal they submit to.” He went on to describe format-neutral submission as one answer, citing how the Genetics Society of America handles submissions to its journal GENETICS: it welcomes submissions in any format, and asks the researcher to follow the submission requirements only once it is clear the research will be moving forward.2,3

Sack suggested that a second solution might be an industry-wide adoption of a common submission protocol, much like the “Common App” used by students applying to universities and colleges in the United States, where the student completes a single online form, and then chooses which schools to send it to.4 This would require a central controlling body or clearinghouse for research articles, along with large-scale coordination across hundreds of publishers, and is perhaps impractical considering the vast differences in requirements and methodologies across fields of research. Since most scholarly research flows through 1 of 5 online submission systems, it seemed more practical for those organizations to work together on solving this challenge.

Along with author frustration, reviewer frustration was also cited as a major concern and a driving force behind the early discussions around the need for a common approach for transferring manuscripts. In a 2016 study published in PLOS ONE,5 the authors found that 20% of biomedical researchers performed between 69% and 94% of reviews. The study noted, “Alternative systems of peer review proposed to improve the peer-review system and reduce the burden include ‘cascade’ or ‘portable’ peer review, which would forward the reviews to subsequent journals when papers are resubmitted after being rejected, thus reducing the number of required reviews.”5 Another analysis published in AJE Scholar concluded that “nearly 15 million hours is spent on reviewing rejected papers each year,” and suggested that an “industry standard for portable peer review would reduce the amount of time busy researchers spend reviewing and re-reviewing the same paper.”6 Although there are some good reasons why peer reviews should not be shared (e.g., different journals have different review criteria and different academic focus), there is a desire for the capability to transfer reviews, leaving it to the different constituents to determine when doing so is useful and appropriate.

As the Director of Product Management at Aries Systems at that time, I was quite interested in developing a common approach for transferring manuscripts. Many of Aries’ customers use multiple submission systems, and Aries was already working on several different projects to enable cascading workflows across those systems. In addition, Aries had recently developed a protocol for ingesting manuscripts from preprint servers and authoring systems, which is a similar process with very similar requirements. There were also increasing calls from journals to include aspects of the completed peer review in transferred articles, including the review comments, the editor decision letters, and the authors’ responses to the reviews. We were eager and ready to consider Sack’s request and embark on what turned out to be a very useful and successful initiative.

Details of MECA

The project kicked off with representatives from HighWire Press, Aries Systems, Clarivate, eJournalPress and the Public Library of Science (which was building a submission system at the time). We named the initiative “Manuscript Exchange Common Approach” (MECA). The first thing we did was define our principles and identify the use cases we would be addressing.

The first principle was to let journals and authors set the rules on what is transferred. The MECA team would define what data and files could be transferred, but only the minimal data needed to start a submission record would be required. A second principle was to define a minimum viable product in order to get the project off the ground quickly, and to be sure it could be expanded for future use cases. A third principle was to design a protocol based on best practices and industry standards so that there would be a low barrier to entry to use MECA. The fourth principle was that MECA was a technical recommendation or specification, not code or software, not a central hub or service like Crossref or ORCID, and it would not be used to trace the path of a manuscript.

With these principles to guide us, we defined 3 primary use cases: 1) Submission System to Submission System (for cascading workflows and cross-publisher transfers); 2) Preprint System to/from Submission System (in response to author enthusiasm for pre-review distribution of their research); and 3) Authoring System to Submission System (to make it easy for authors to push their research to the journal of their choice). A secondary use case, very broad in scope, was also defined: Submission System to Various Other Systems, such as artificial intelligence/machine learning services, production services, taxonomy services, etc.

The MECA team began to work on a specification to define what a common transfer protocol would look like. The project was broken down into several parts: vocabulary, packaging, manifest, transfer metadata, submission metadata, review metadata, identity, and transmission. These are described below in more detail.

Vocabulary

The goal was to identify a standard nomenclature that would give us a baseline understanding of how each system uses the language of peer review, so that any specification would use a common lexicon (for example, referee versus reviewer). The vocabulary list had 70+ terms and included definitions, synonyms, “often-confused-with” alternatives, and specific examples of usage. It covered both publishing terms, like author, reviewer, article, and abstract, and technical terminology, like document type definition (DTD), extensible markup language (XML), interoperability, and MIME type. There was an understanding that this list could be updated over time as new terms were introduced.
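
The vocabulary was a reference document rather than software, but the shape of each entry (a preferred term, its definition, synonyms, and often-confused-with alternatives) can be sketched as a small lookup table that maps any synonym to the preferred term. This is a minimal, hypothetical illustration: the class and function names are invented here, and the single entry shown is an example, not a quote from the MECA vocabulary list.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class VocabularyTerm:
    """One entry in a hypothetical shared vocabulary list."""
    term: str                                   # preferred term
    definition: str
    synonyms: List[str] = field(default_factory=list)
    often_confused_with: List[str] = field(default_factory=list)
    example: str = ""


def build_index(entries: List[VocabularyTerm]) -> Dict[str, str]:
    """Map each preferred term and each synonym (lower-cased) to the preferred term."""
    index: Dict[str, str] = {}
    for entry in entries:
        index[entry.term.lower()] = entry.term
        for synonym in entry.synonyms:
            index[synonym.lower()] = entry.term
    return index


def normalize(word: str, index: Dict[str, str]) -> str:
    """Return the preferred term for a word, or the word unchanged if it is not listed."""
    return index.get(word.lower(), word)


# Illustrative entry only; not copied from the MECA vocabulary.
VOCABULARY = [
    VocabularyTerm(
        term="reviewer",
        definition="A person asked to evaluate a manuscript for a journal.",
        synonyms=["referee"],
        example="The editor invited two reviewers.",
    ),
]
```

With this table, `normalize("Referee", build_index(VOCABULARY))` returns `"reviewer"`, so two systems that label the same role differently resolve to one shared term.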

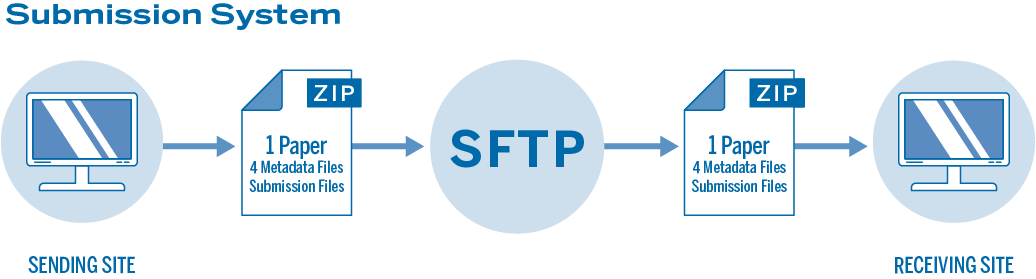

Packaging

The entire group of files to be transferred is wrapped up into a zip file, as this is a simple, flexible, and well-understood way to assemble files for transmission. There is one zip file per manuscript, and the package contains the following files: Manifest.xml (described by a new DTD for the file manifest), Transfer.xml (described by a new DTD, identifying the source and destination of the package, along with contact and security information), Article.xml (based on the Journal Article Tag Suite [JATS], containing information about the article), Reviews.xml (described by a new DTD based on JATS, containing information about the peer review), and any source files (manuscript, figures, tables, etc.). Only Reviews.xml and the source files are optional.
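
As a minimal sketch of the packaging step, assuming Python’s standard zipfile module and the file names as written in this article (the Recommended Practice should be checked for exact names and capitalization), the function below refuses to build a package unless the required Manifest, Transfer, and Article files are present, then writes every file into a single in-memory zip. The function name and the dictionary-of-bytes input are hypothetical choices for illustration.

```python
import io
import zipfile
from typing import Dict

# File names as written in this article; confirm exact names against the Recommended Practice.
REQUIRED_FILES = ("Manifest.xml", "Transfer.xml", "Article.xml")


def build_package(files: Dict[str, bytes]) -> bytes:
    """Return one manuscript's package as zip bytes.

    `files` maps each path inside the archive to that file's contents.
    """
    missing = [name for name in REQUIRED_FILES if name not in files]
    if missing:
        raise ValueError(f"package is missing required files: {missing}")
    buffer = io.BytesIO()
    with zipfile.ZipFile(buffer, "w", compression=zipfile.ZIP_DEFLATED) as archive:
        for path, data in files.items():
            archive.writestr(path, data)
    return buffer.getvalue()
```

Optional content, such as the peer review file or the manuscript source files, is simply additional entries in the same dictionary.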

Manifest Information

This is an XML file that serves as an inventory of the objects, files, and other data included in the transmission package. Every item in the zip package must have an entry in the Manifest.xml file. The manifest might also include entries for items that are not in the zip file but are referenced by URL/URI, such as a dataset or video held at a repository. The MECA specification includes examples of both the Manifest DTD and a Manifest XML file.
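
The rule that every item in the zip must appear in the manifest lends itself to an automated check. The sketch below, using Python’s xml.etree.ElementTree, writes a deliberately simplified manifest and reports any zip entries it does not list. The manifest and item element names and the href and location attributes are hypothetical stand-ins, not the MECA Manifest DTD.

```python
import xml.etree.ElementTree as ET
from typing import List, Sequence


def build_manifest(package_paths: Sequence[str], external_uris: Sequence[str] = ()) -> str:
    """Write a simplified manifest: one <item> per zip entry plus one per external URI."""
    root = ET.Element("manifest")
    for path in package_paths:
        ET.SubElement(root, "item", {"href": path, "location": "package"})
    for uri in external_uris:
        # Items held elsewhere (e.g. a dataset in a repository) are listed but not zipped.
        ET.SubElement(root, "item", {"href": uri, "location": "external"})
    return ET.tostring(root, encoding="unicode")


def unlisted_items(manifest_xml: str, zip_names: Sequence[str]) -> List[str]:
    """Return zip entries that have no manifest entry (an empty list means the check passes)."""
    root = ET.fromstring(manifest_xml)
    listed = {item.get("href") for item in root.iter("item")}
    return [name for name in zip_names if name not in listed]
```

A receiver could run `unlisted_items` against `ZipFile.namelist()` before ingesting a package and reject the package if the result is not empty.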

Transfer Information

This XML file identifies who is sending and who is receiving the package. Typical information included in Transfer.xml covers the service provider (submission system, preprint server, etc.), contact information (first name, last name, email address, etc.), and publication information (journal title, etc.). The transfer file may also include security information, such as an authentication code. The MECA specification contains examples of the transfer DTD and XML files as well.
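
A sender might populate Transfer.xml from its own configuration along the lines of the sketch below. Every element name here (transfer, source, destination, service-provider, journal-title, contact, security) is a hypothetical placeholder chosen for readability; the actual element names come from the transfer DTD in the MECA specification.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass
from typing import Optional


@dataclass
class Contact:
    first_name: str
    last_name: str
    email: str


def build_transfer(
    source_provider: str,
    destination_provider: str,
    journal_title: str,
    contact: Contact,
    auth_code: Optional[str] = None,
) -> str:
    """Write a simplified transfer file naming sender, receiver, contact, and optional security code."""
    root = ET.Element("transfer")
    ET.SubElement(ET.SubElement(root, "source"), "service-provider").text = source_provider
    destination = ET.SubElement(root, "destination")
    ET.SubElement(destination, "service-provider").text = destination_provider
    ET.SubElement(destination, "journal-title").text = journal_title
    person = ET.SubElement(root, "contact")
    ET.SubElement(person, "first-name").text = contact.first_name
    ET.SubElement(person, "last-name").text = contact.last_name
    ET.SubElement(person, "email").text = contact.email
    if auth_code is not None:
        # Security information is optional, so the element is omitted when no code is supplied.
        ET.SubElement(root, "security", {"type": "authentication-code"}).text = auth_code
    return ET.tostring(root, encoding="unicode")
```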

Submission Metadata

Information about the submission itself is contained in the Article.xml file. This XML file is compliant with the JATS Green DTD (the Archiving and Interchange Tag Set). The minimum required data are the article title and the corresponding author; however, the sender can include as much data as they would like, as long as the data comply with the JATS schema. It is up to the receiver to decide how much of the provided data to ingest. For example, the sender might include the required fields plus an abstract, keywords, and funding information. If the receiver does not have a corresponding “funding information” field in its system, it would simply ignore that data or deposit it in a general-use field. It is important to note that only the most recent revision of the submission is written into the Article.xml file.
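
The sketch below illustrates both sides of that arrangement. The sender writes only the required fields using JATS element names (article-title, contrib with corresp="yes", surname, given-names, email), although the fragment is minimal and has not been validated against the JATS DTD. The receiver copies only the elements it has fields for and keeps anything else in the article metadata, such as a funding-group, as raw XML in a general-use field. The receiver’s field names and the SUPPORTED mapping are hypothetical.

```python
import xml.etree.ElementTree as ET
from typing import Dict, List, Union

META = "front/article-meta"


def build_minimal_article(title: str, surname: str, given_names: str, email: str) -> ET.Element:
    """Sender side: a minimal JATS-style article with only the required title and corresponding author."""
    article = ET.Element("article", {"article-type": "research-article"})
    meta = ET.SubElement(ET.SubElement(article, "front"), "article-meta")
    ET.SubElement(ET.SubElement(meta, "title-group"), "article-title").text = title
    contrib = ET.SubElement(
        ET.SubElement(meta, "contrib-group"),
        "contrib",
        {"contrib-type": "author", "corresp": "yes"},
    )
    name = ET.SubElement(contrib, "name")
    ET.SubElement(name, "surname").text = surname
    ET.SubElement(name, "given-names").text = given_names
    ET.SubElement(contrib, "email").text = email
    return article


# Receiver side (hypothetical): the fields this system supports, and where to find them.
SUPPORTED = {
    "title": f"{META}/title-group/article-title",
    "corresponding_email": f"{META}/contrib-group/contrib[@corresp='yes']/email",
    "abstract": f"{META}/abstract",
}
HANDLED_TAGS = {"title-group", "contrib-group", "abstract"}


def ingest(article: ET.Element) -> Dict[str, Union[str, List[str]]]:
    """Copy supported fields; park unsupported article-meta children in a general-use field."""
    record: Dict[str, Union[str, List[str]]] = {}
    for field_name, path in SUPPORTED.items():
        value = article.findtext(path)
        if value is not None:
            record[field_name] = value.strip()
    meta = article.find(META)
    extras = [ET.tostring(child, encoding="unicode") for child in meta if child.tag not in HANDLED_TAGS]
    if extras:
        record["general_notes"] = extras
    return record
```

Because the receiver decides what to keep, a richer Article.xml never breaks a simpler receiver; unrecognized elements are set aside rather than rejected.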

Peer Review Data

Because the JATS DTD is optimized for conveying article information, it does not currently include any data about the peer review process. Initiatives like JATS for Reuse (JATS4R) are working on this, but because any such extension of JATS lies in the future, the MECA team had to define a new DTD to convey peer review information. The Reviews.xml file is based on JATS and can contain peer review data such as questions and answers, comments, ratings, marked-up files, and decision letters. Multiple reviews from multiple revisions can be included in 1 file. Because peer review is often anonymized, there is an accommodation to redact reviewer names and contact information based on the sending journal’s privacy policy. One question that has come up often is, “Why not include the peer review information in the Article.xml?” The MECA team felt that it would be best to keep the Article.xml file fully JATS compliant.
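
The redaction accommodation can be pictured as a filter applied before Reviews.xml is written. In the minimal sketch below, a boolean share_identities flag stands in for the sending journal’s privacy policy, reviews from several revisions go into one file ordered by revision, and redacted reviews simply omit the reviewer element. The Review fields and all element names are hypothetical simplifications, not the MECA review DTD.

```python
import xml.etree.ElementTree as ET
from dataclasses import dataclass, replace
from typing import Iterable, List, Optional


@dataclass(frozen=True)
class Review:
    revision: int                         # manuscript revision this review belongs to
    comments: str
    recommendation: str
    reviewer_name: Optional[str] = None
    reviewer_email: Optional[str] = None


def redact(reviews: Iterable[Review], share_identities: bool) -> List[Review]:
    """Apply the sending journal's policy: drop reviewer identity unless sharing is allowed."""
    if share_identities:
        return list(reviews)
    return [replace(r, reviewer_name=None, reviewer_email=None) for r in reviews]


def build_reviews_xml(reviews: Iterable[Review]) -> str:
    """Write all reviews, across all revisions, into one simplified XML document."""
    root = ET.Element("reviews")
    for review in sorted(reviews, key=lambda r: r.revision):
        entry = ET.SubElement(root, "review", {"revision": str(review.revision)})
        if review.reviewer_name is not None:
            reviewer = ET.SubElement(entry, "reviewer")
            ET.SubElement(reviewer, "name").text = review.reviewer_name
            if review.reviewer_email is not None:
                ET.SubElement(reviewer, "email").text = review.reviewer_email
        ET.SubElement(entry, "recommendation").text = review.recommendation
        ET.SubElement(entry, "comments").text = review.comments
    return ET.tostring(root, encoding="unicode")
```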

Identity

It was realized early on that a manuscript might be transferred multiple times, and that it would be useful if the package had a consistent identity across systems, so that a system would know whether it had already sent or received that package in the past. Multiple identifiers exist today, such as the manuscript number and the digital object identifier (DOI), but those identifiers already have specific uses. Therefore, the MECA team decided that a universally unique identifier (UUID) methodology should be used. A UUID is a 128-bit number that, once generated, is for all practical purposes unique. It does not require any central controlling authority and has no semantic meaning.
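
Python’s standard uuid module is enough to sketch the identity rule. The example assumes random (version 4) UUIDs, which the article does not specify, and assumes that a package keeps its existing identifier when it is transferred again. ReceivedLog is a hypothetical receiver-side record that answers the question the identifier exists to answer: has this system seen this manuscript before?

```python
import uuid
from typing import Optional, Set


def package_id_for(existing_id: Optional[str]) -> str:
    """Reuse a manuscript's existing identifier; mint a new random UUID only if there is none."""
    return existing_id if existing_id else str(uuid.uuid4())


class ReceivedLog:
    """Hypothetical receiver-side record of package identifiers already seen."""

    def __init__(self) -> None:
        self._seen: Set[str] = set()

    def record(self, package_id: str) -> bool:
        """Remember package_id and return True if it had been seen before."""
        # uuid.UUID() rejects malformed IDs and normalizes case, so upper- and lower-case forms match.
        canonical = str(uuid.UUID(package_id))
        seen_before = canonical in self._seen
        self._seen.add(canonical)
        return seen_before
```

No registry or central authority is consulted at any point: each system generates and compares identifiers locally, which matches the property described above.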

Transmission

Perhaps the most controversial decision made by the MECA team was to use the secure file transfer protocol (SFTP) to transmit the package from system to system. SFTP is a longstanding and very common way for computer servers to send and receive files over the Internet. SFTP was chosen because it is well established, and most systems will be able to use it. Another benefit is that SFTP works well when sending large files (such as image and data files), and if a transfer is interrupted, it can easily be resumed. However, the team also recognized that support for transmission via an application programming interface (API), such as REST or SWORD, is likely to be one of the first improvements, because API technology is widespread and has additional advantages, such as real-time status messaging.

NISO Gets Involved

As the specification was being written, members of the MECA team began to promote the concept throughout the scholarly publishing community. There were articles in the Scholarly Kitchen7 and in the Naturejobs blog.8 There were also presentations at meetings such as the Society for Scholarly Publishing,9 Council of Science Editors, Force11, STM Week, and JATS Con. This captured the attention of the National Information Standards Organization (NISO), which invited MECA to form a NISO Working Group10 in order to make the MECA protocols an official NISO Recommended Practice.

Along with the original MECA members, the Working Group was expanded to include the following representatives from across scholarly publishing: the American Chemical Society, the American Physical Society, Cold Spring Harbor, eLife Sciences, Green Fifteen Publishing Consultancy, IEEE, Jisc, the National Library of Medicine, Springer Nature, and Taylor & Francis. The Working Group spent several months revisiting and revising the original specification, building on the work that had already been done.

The MECA Recommended Practice was approved on June 26, 2020, and published on July 6, 2020.11,12 A NISO Recommended Practice is defined as a “recommended ‘best practice’ or ‘guideline’ for methods, materials, or practices in order to give guidance to the user. Such documents usually represent a leading edge, exceptional model, or proven industry practice. All elements of Recommended Practices are discretionary and may be used as stated or modified by the user to meet specific needs.”

Ultimately the MECA Recommended Practice can be seen as a successful collaboration with stakeholders from various areas of the publishing ecosystem, which provides a framework for manuscript exchange with low barriers to entry. As with the initial recommendations, the Working Group recognized that there is still work to do, and as such, many of the participants have committed to working together to evolve the recommended practice. A NISO Standing Committee has been formed and includes the following participants: the American Chemical Society, the American Diabetes Association, Apex, Aries Systems, California Digital Library, Clarivate, Cold Spring Harbor, eLife Sciences, Green Fifteen, IEEE, the National Library of Medicine, Overleaf, Public Knowledge Project, Public Library of Science, River Valley, Scholastica, and Taylor & Francis.

The NISO MECA Standing Committee meets monthly and will take up the following activities: promotion and education of the current Recommended Practice; evolution of the specification to include updated protocols and technology; non-English language support; integration with efforts by JATS4R, STM Review Taxonomy, and DocMaps initiatives; and support of additional use cases.

As a founding member, and then as co-chair of both the Working Group and the Standing Committee, I have had the honor and privilege of working with so many amazing and talented people from across the scholarly publishing industry. What started out as an initiative to help commercially focused submission system vendors collaborate more efficiently has turned into a cross-industry effort of commercial, nonprofit, professional society, and governmental agency cooperation that will benefit researchers by removing friction in the research evaluation process and making the flow of scholarly knowledge smoother and faster. In order for MECA to be fully effective, it needs widespread adoption, and to that end I request that editors and publishers ask their system vendors if they have already adopted MECA or if they plan to adopt it. If the answer is no, then point them to the MECA website (https://www.manuscriptexchange.org) and to the NISO Recommended Practice (https://www.niso.org/publications/rp-30-2020-meca).

References and Links

- https://www.stm-assoc.org/events/stm-frankfurt-conference-2016/

- http://zeeba.tv/media/conferences/ape-2016/0101-John-Sack/

- Sack J. Friction in the scholarly workflow: obstacles and opportunities. Inform Serv Use. 2017;37:17–22. https://doi.org/10.3233/ISU-170823.

- https://www.commonapp.org/

- Kovanis M, Porcher R, Ravaud P, Trinquart L. The global burden of journal peer review in the biomedical literature: strong imbalance in the collective enterprise. PLOS ONE. 2016;11:e0166387. https://doi.org/10.1371/journal.pone.0166387.

- https://www.aje.com/arc/peer-review-process-15-million-hours-lost-time/

- https://scholarlykitchen.sspnet.org/2018/07/25/guest-post-manuscript-exchange-meca-can-academic-publishing-world-cant/

- http://blogs.nature.com/naturejobs/2018/06/11/resubmitting-your-study-to-a-new-journal-could-become-easier/

- https://www.youtube.com/watch?list=PLeXrNQ3rZI7jB0bf_Tl9wmNg2MNklP_Il&v=ZEAQjrURy4g

- https://www.niso.org/press-releases/2018/05/niso-launches-new-project-facilitate-manuscript-exchange-across-systems

- https://www.niso.org/standards-committees/meca

- https://www.niso.org/publications/rp-30-2020-meca

Tony Alves (0000-0001-7054-1732; @OccupySTM; https://www.Tonyhopedale.com) is Co-Chair, NISO MECA Standing Committee.